Beyond Context Graphs: How Ontology, Semantics, and Knowledge Graphs Define Context. The Year of the Graph Newsletter Vol. 30, Spring 2026

What are context graphs, what are they good for, and why are they dubbed AI’s trillion-dollar opportunity? What does context mean actually, and how can we define context using graphs and ontologies? And how can different types of graphs and graph technologies power AI?

Gartner highlighted Data Management, Semantic Layers, and GraphRAG as Top Trends in Data and Analytics for 2026. Startups and incumbents in the graph technology space are making progress, while graph is becoming the fastest growing segment in AI research.

A comprehensive, up-to-date repository, visualization, and analysis of offerings across the graph technology space has been unveiled. New and existing combinations of Graphs and AI are being used to power use cases such as software engineering productivity and supporting enterprise needs at Netflix scale.

New graph database products, features, and benchmarks are available. Use cases as well as research and development on ontologies are on the rise too, including topics such as Enterprise Architecture, visual tooling, and quality assessment for LLM-assisted use of ontologies.

And yet, the most widely discussed topic in the world of graph technology – and beyond – for this past couple of months has been context graphs. So what are context graphs and where do they fit in the graph technology landscape?

In this issue of the Year of the Graph, we explore progress in Ontology, Semantics, Knowledge Graphs, Graph Databases and Analytics, and how these technologies can help define context and power AI.

📋 Table of Contents

- An Introduction to Context Graphs

- Context Beyond Context Graphs

- Ontologies, Context Graphs, and Semantic Layers: What AI Actually Needs in 2026

- Tooling and Evaluation Frameworks for Ontologies

- From Retrieval Augmented Generation to Knowledge Augmented Generation

- Knowledge Graphs in Software Engineering and Enterprise Architecture

- Knowledge Graph Research, Applications and Best Practices

- Knowledge Graph Tools and Platforms

- The State of the Graph Database Market

- Graph Analytics and Graph AI Updates

This issue of the Year of the Graph is brought to you by metaphacts, Graphwise, Connected Thinking, Linkurious, Process Tempo, State of the Graph, Connected Data London, and Pragmatic AI Training.

Why Most Enterprise AI Strategies Fail

Even as AI adoption soars, 80% of enterprises report no return on their AI investments, and 42% end up abandoning their strategies entirely. At the same time, those who pivot away from AI risk accelerating their own obsolescence. The missing link? A knowledge graph with a semantic layer.

By pairing LLMs with a symbolic layer, companies are able to leverage AI and trust that its outputs are contextualized and explainable. This whitepaper dives into how knowledge graphs provide the necessary structure and grounding that LLMs lack, enabling scalable, future-proof AI strategies.

An Introduction to Context Graphs

Rules tell an agent what should happen in general. Decision traces capture what happened in specific cases. Agents don’t just need rules. They need access to the decision traces that show how rules were applied in the past, where exceptions were granted, how conflicts were resolved, who approved what, and which precedents actually govern reality.

A context graph is the accumulated structure formed by those traces: not “the model’s chain-of-thought,” but a living record of decision traces stitched across entities and time so precedent becomes searchable. Over time, that context graph becomes the real source of truth for autonomy – because it explains not just what happened, but why it was allowed to happen.

This is how Foundation Capital’s Jaya Gupta and Ashu Garg defined context graphs, claiming they will be the single most valuable asset for companies in the era of AI, and a trillion-dollar opportunity. This thesis sparked an array of follow-ups, both from Gupta and Garg as well as from others.
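To make the definition more concrete, here is a minimal sketch of decision traces indexed by entity and time so that precedent can be searched. It is an illustration only, not an implementation proposed by Gupta and Garg: the `DecisionTrace` and `ContextGraph` classes, their fields, and the sample discount rule are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass(frozen=True)
class DecisionTrace:
    """One recorded decision: what was decided, about which entities, and why."""
    decided_on: date
    entities: frozenset   # identifiers of the entities the decision touched
    rule: str             # the general rule that was in play
    outcome: str          # what actually happened in this specific case
    approved_by: str
    rationale: str        # why the outcome was allowed


@dataclass
class ContextGraph:
    """Hypothetical store: traces stitched across entities and time."""
    traces: list = field(default_factory=list)
    by_entity: dict = field(default_factory=dict)

    def record(self, trace: DecisionTrace) -> None:
        self.traces.append(trace)
        for entity in trace.entities:
            self.by_entity.setdefault(entity, []).append(trace)

    def precedents(self, entity: str, rule: str, as_of: date) -> list:
        """Earlier decisions on this entity under this rule, newest first."""
        matches = [
            t for t in self.by_entity.get(entity, [])
            if t.rule == rule and t.decided_on <= as_of
        ]
        return sorted(matches, key=lambda t: t.decided_on, reverse=True)


# Made-up sample data: one exception and one regular application of a rule.
graph = ContextGraph()
graph.record(DecisionTrace(
    decided_on=date(2025, 3, 1),
    entities=frozenset({"customer:acme", "contract:42"}),
    rule="discount-cap-10pct",
    outcome="exception granted: 15% discount",
    approved_by="vp-sales",
    rationale="multi-year renewal",
))
graph.record(DecisionTrace(
    decided_on=date(2025, 9, 12),
    entities=frozenset({"customer:acme"}),
    rule="discount-cap-10pct",
    outcome="rule applied: 10% discount",
    approved_by="account-manager",
    rationale="single-year deal",
))

found = graph.precedents("customer:acme", "discount-cap-10pct", date(2025, 12, 31))
for trace in found:
    print(trace.decided_on, trace.outcome, "| approved by", trace.approved_by)
```

An agent consulting this structure sees not only the rule, but also who granted an exception, when, and on what grounds. A production system would presumably sit on a graph database rather than in-memory dictionaries.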

Some people, like Gartner’s Afraz Jaffri, believe that using context as an adjective to describe a graph is redundant as a graph implicitly holds context. Others, like Graphwise’s Andreas Blumauer, see context graphs as an evolution that builds upon knowledge graphs, adding time and decision lineage.

Todd Blaschka identifies what he calls the Logic Gap in the context graph narrative: the distance between recording a decision and understanding its meaning. While a knowledge graph defines static relationships, a context graph captures operational reality – decision traces, temporal intelligence, and lineage.

When AI architecture lacks a formal knowledge graph foundation, you encounter three critical failures: identity crisis, hallucinated judgment, and context rot, Blaschka notes. Jessica Talisman elaborates further, arguing that “context graph” is a rebranding, and context graphs are great in theory but will require solid knowledge management foundations to become a reality.

Transform Your AI With A Semantic Layer

Enterprises are pouring millions into AI, but without the right foundation, that investment stalls. Graphwise delivers the knowledge graph and semantic AI infrastructure that make enterprise AI ready to scale, trusted, and built to perform.

Recognized by Gartner, named “Data Integration Innovation of the Year” at the 2025 Data Breakthrough Awards, and listed among KMWorld’s 100 Companies That Matter, Graphwise is the industry’s most comprehensive and validated solution.

Get started with Graphwise today to make generative AI reliable and scalable for your business.

Context Beyond Context Graphs

But there are even deeper issues with the way “context” is used, Talisman expands. When a word becomes a billing unit, the concept associated with the word can quickly lose meaning. Are we discussing context relative to tokens or context designed for AI reliability? Is it a graph? A markdown file? A YAML format or schema tables?

To help disambiguate things, the mission of the W3C Context Graphs Community Group is to develop specifications, vocabularies, and best practices for representing and resolving contextual misalignment between global knowledge representations and local interpretation contexts in decision systems and human–AI workflows.

Jason Stanley argues that AI agents need five graphs, and nobody has all of them.

Access graphs map who can reach what. Security graphs map what is exploitable and what the blast radius looks like. Context graphs capture decision trajectories so agents can act on precedent. Action graphs model what operations are legal on what objects under what rules. Knowledge graphs represent entities and relationships across the enterprise.

In more practical terms, Andrea Splendiani, Kurt Cagle, the Glean team and Will Lyon share approaches for implementing context graphs. Splendiani and Cagle offer RDF-based alternatives, while Lyon works with Neo4j. The Glean team share their architecture, based on the premise that “you can’t reliably capture the why; you can capture the how”.

The context graph thesis also became the blueprint for the development of the first stable release of Semantica: an open source framework for building context graphs and decision intelligence layers for AI.

Connected Thinking: From civilizational patterns to the next system

A unique journey of exploration, transformation, companionship, and grounding. A series of interactive seminars on foot, reviving the peripatetic school tradition of ancient thinkers in meta-modern times.

● Civilizational Patterns: An Introduction to Macrohistory.

● The Pulsation of the Commons Hypothesis: How commons-based coordination has pulsed through history as an alternative.

● P2P and the Commons: The emerging logic of peer-to-peer as a post-hierarchical coordination model.

● The Next System: What Can We Know? Mapping the contours of what replaces the exhausted form.

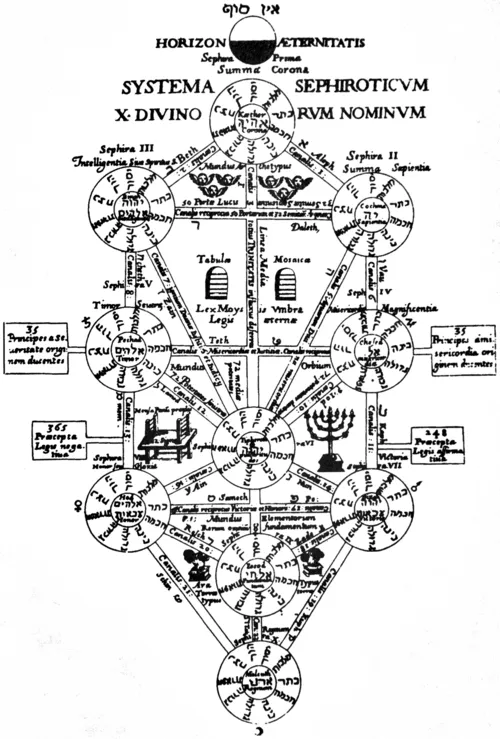

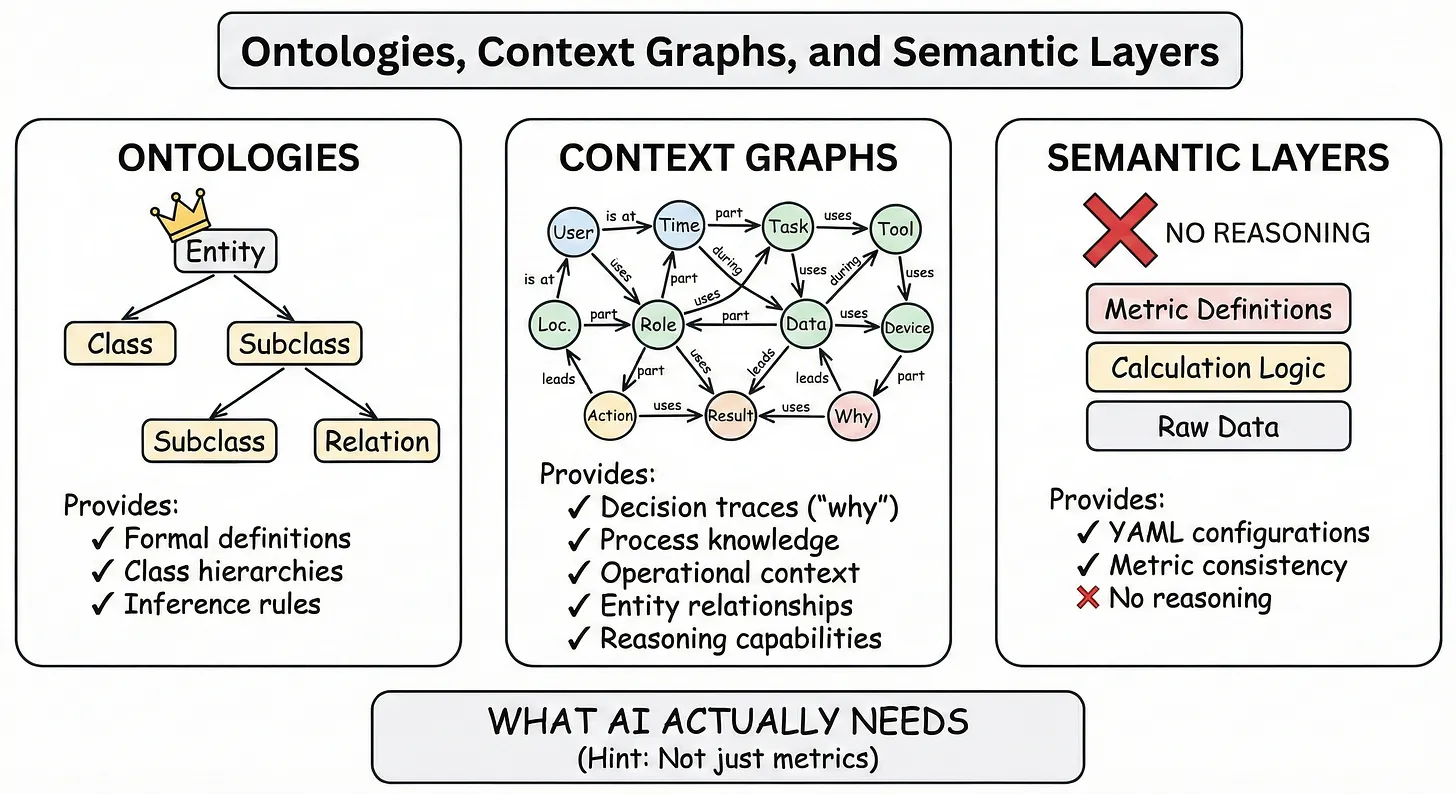

Ontologies, Context Graphs, and Semantic Layers: What AI Actually Needs in 2026

Inevitably, the context graph conversation touches upon ontology as well, in the sense of capturing context in a way that both people and AI can reliably use. As Graphlit’s Kirk Marple frames it, entity ontologies are largely solved by existing standards. The real unsolved work is temporal validity, decision traces, and fact resolution.

The current conversation has crystallized around a dichotomy: prescribed ontologies vs learned ontologies. What’s missing from this framing is a third option that’s been hiding in plain sight: Adopt what exists. Extend where needed. Focus learning on what’s genuinely novel.

Even though ontology is considered part of the fundamentals of Information Systems, with 2026 having been proclaimed the year of the ontology, its origins are in philosophy. The entry for Ontology and Information Systems in the Stanford Encyclopedia of Philosophy provides background and references.

In 2026, ontology is trending because AI agents exposed the gap. Years of pipeline-stacking without caring about meaning landed us here: agents failing precisely where semantic understanding was supposed to live. Connectivity without semantics is just faster error, as Frédéric Verhelst notes in “Own the Ontology or Rent Your Future”.

Verhelst identifies four capability gaps that make agentic AI ungovernable and proposes the Minimum Viable Ontology approach. Following up, he elaborates on the missing contract: why most boards cannot govern what they cannot define, and how to fix this with semantics and ontology.

The world at large seems to be waking up to the importance of semantics and ontology. Gartner highlighted Data Management, Semantic Layers, and GraphRAG as Top Trends in Data and Analytics for 2026, with Semantic Enrichment recognized as a key capability of Data Management Platforms. Gartner now states that budget for semantic capabilities is non-negotiable.

Bill Inmon, widely recognized as the father of the data warehouse, shared his journey towards semantics and ontology too. Inmon joined forces with Jessica Talisman to introduce some perspectives on ontology, admitting that he never wanted to end up knowing anything about ontology; it was ontology that found him.

Inmon and Talisman followed up with the anatomy of an ontology, where they explore what ontologies look like, how they are structured, and what their defining characteristics and structures are. For people drawn to ontology by the conversation on AI, context graphs and semantic layers, Talisman explores their relationship and what AI needs in 2026.

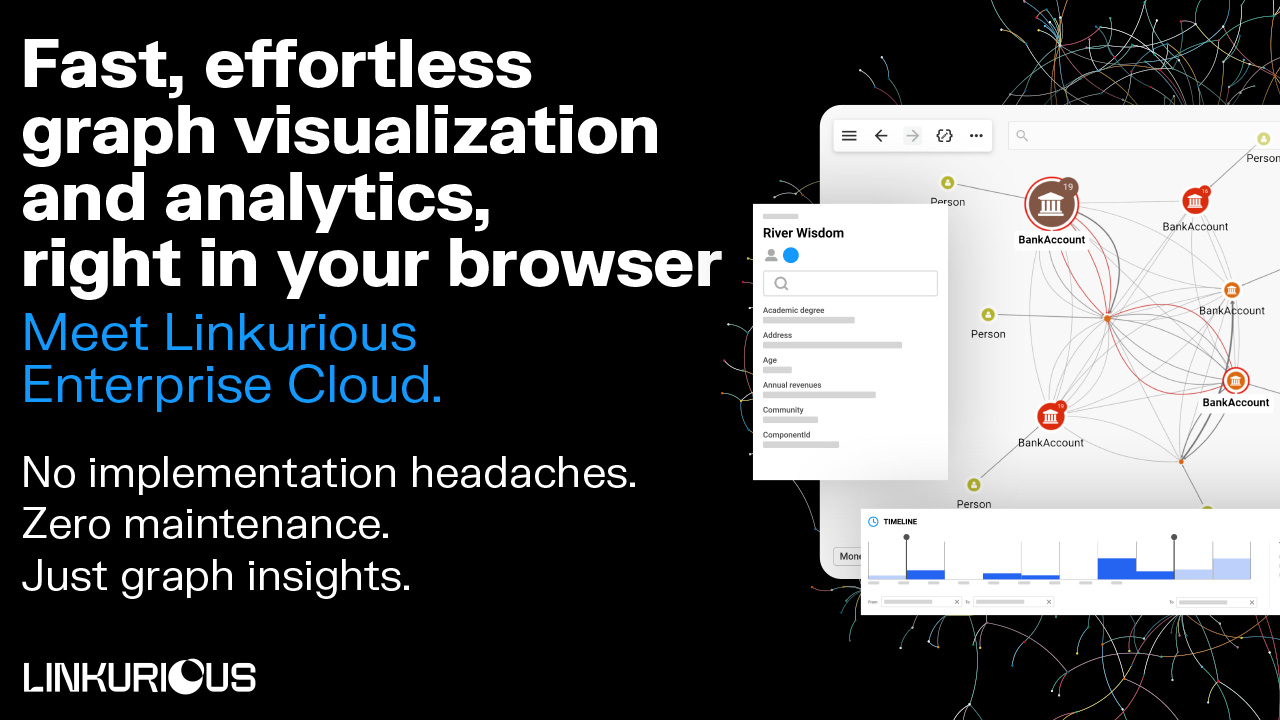

The shortest path between you and graph insights

Graph visualization and analytics just got a lot easier!

Introducing Linkurious Enterprise Cloud: An online solution that brings powerful graph exploration to anyone, right from a browser.

Create an account, connect your graph database, start the exploration of your data, collaborate with your teammates and share your results, all before lunch.

What else?

• Compatibility with leading graph databases

• Zero IT bottlenecks or infrastructure tasks

• Flexible plans that adapt to your needs

The fastest way to start a graph project today — and the easiest way to scale it tomorrow.

Sign up now for a 30-day free trial.


Tooling and Evaluation Frameworks for Ontologies

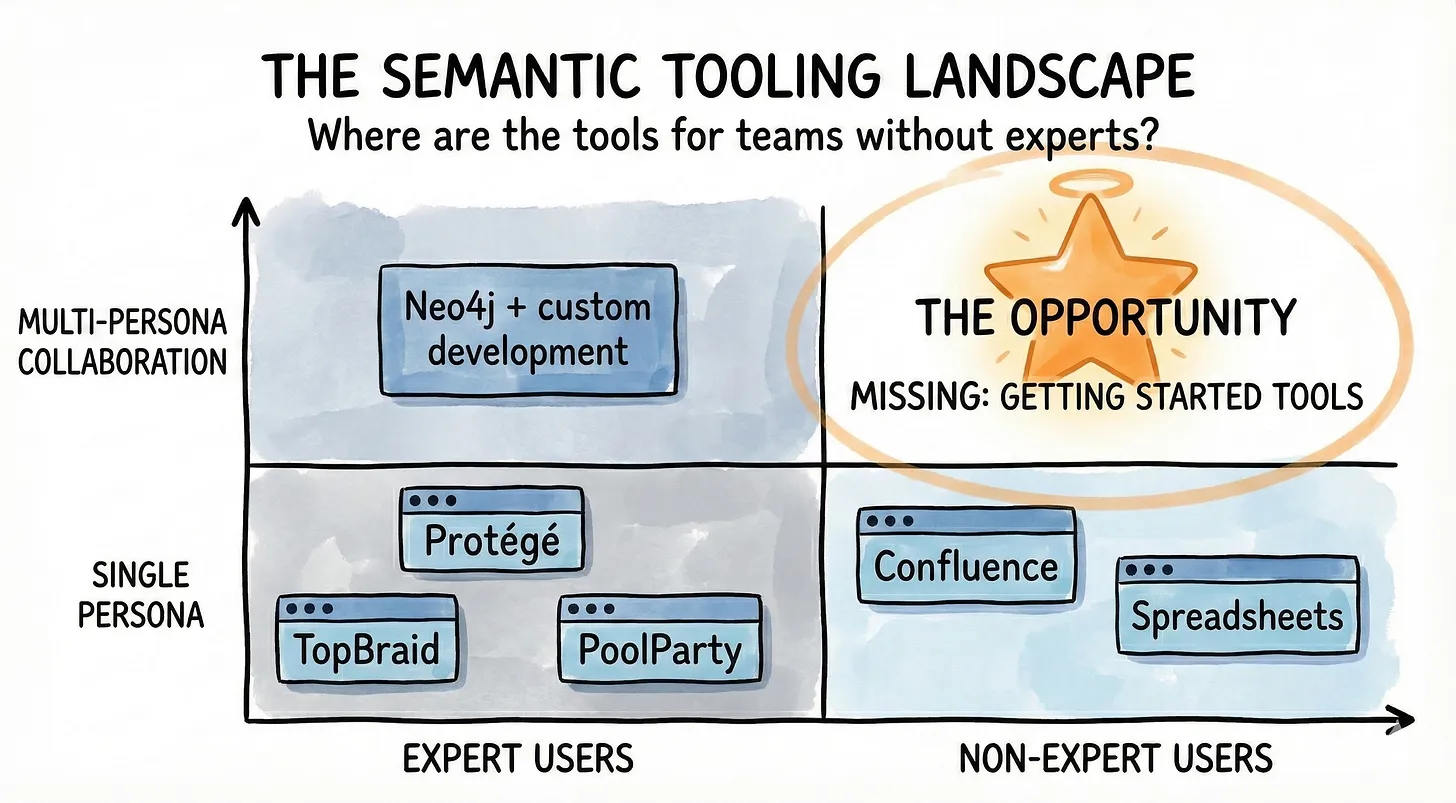

For non-experts, when it comes to implementing semantic artifacts such as ontologies, semantic work may need its Figma moment. Even when people understand why AI depends on semantics and get the buy-in, Anna Bergevin argues that the tools and process are insufficient for solving this problem.

Bergevin notes that currently, semantics tools are built for experts, not for getting started. She identifies a gap in the market, and believes the parallel success story of how design democratized itself without undermining expertise may be instructive. She is not alone in this observation.

Athanassios Hatzis started a conversation on tooling to visualize ontologies, which soon expanded to include ontology editors. Steve Hedden shared a list of free, open-source RDF & ontology visualization tools. New tools for semantic modeling work such as Termboard, OntoBoom, and OntoView are emerging, while others like gra.fo are being retired.

Some people may be tempted to get LLMs to write their ontology, but Frédéric Verhelst and Joe Hoeller warn against blindly trusting LLM ontologies – aka “vibe ontologies”. However, like most professionals, knowledge engineers can benefit from using LLMs thoughtfully to assist in their work.

A framework and benchmark for open source LLM-driven ontology construction for enterprise knowledge graphs was presented by Liber AI. More benchmarks for evaluating LLM-generated ontologies were developed, one as a collaboration between LettrIA and EURECOM and another one featuring researchers from German and British universities.

Ontologies are knowledge artifacts, but they’re also software artifacts. Like any software, their quality should be measured in a systematic, operationalizable way. In “Evaluating the Quality of Ontologies”, Neo4j’s Jesús Barrasa and Alexander Erdl reviewed some papers on this topic and implemented some of the ideas they found.

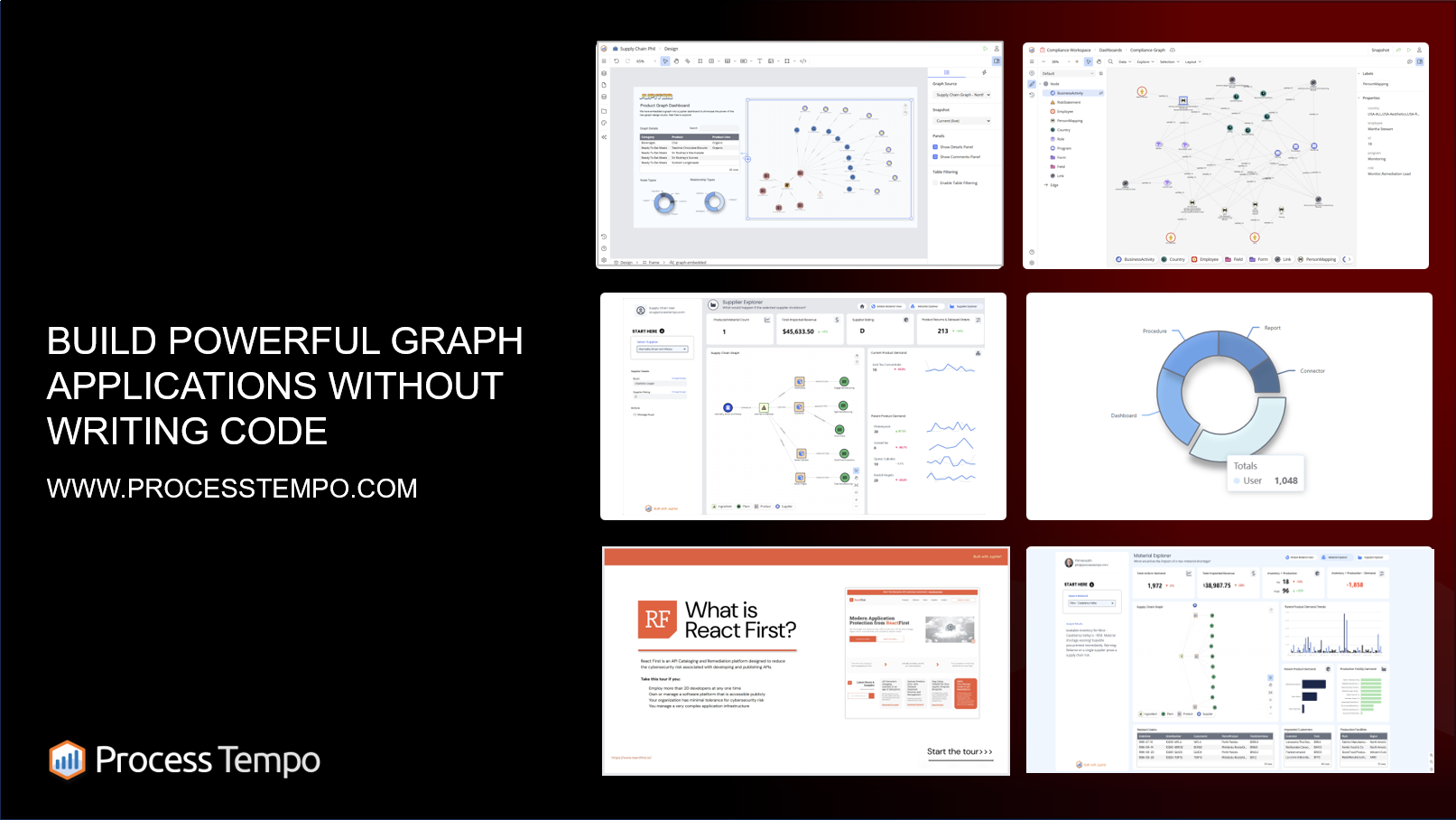

Process Tempo is the missing layer every graph stack needs

Built to accelerate the design, development, and deployment of graph-driven applications, Process Tempo turns your ideas into production-ready solutions faster.

Whether you’re building enterprise knowledge graphs or data intelligence platforms, Process Tempo provides the speed, structure, and flexibility needed to bring your connected ideas to life.

From Retrieval Augmented Generation to Knowledge Augmented Generation

Using ontology in Retrieval Augmented Generation (RAG) is getting traction too. Sergey Vasiliev labels this family of approaches KAG: Knowledge Augmented Generation. Rather than only improving retrieval, the aim is to integrate a knowledge graph as a reasoning substrate. In this view, the graph is not merely a retriever index but a semantic backbone.

In “Enhancing HippoRAG with Graph-Based Semantics”, a team from Graphwise show how an ontology-based knowledge graph boosts the multi-hop Q&A accuracy of a leading schemaless GraphRAG system. Replacing generic graph construction with strict ontologies and structured knowledge graphs transforms HippoRAG from an associative engine into a reasoning engine.

Granter research compared a variety of approaches: standard vector-based RAG, GraphRAG, and retrieval over knowledge graphs built from ontologies derived either from relational databases or textual corpora. Results show that ontology-guided knowledge graphs incorporating chunk information achieve competitive performance with state-of-the-art frameworks.

That’s not to say that other RAG and GraphRAG approaches have gone away. Raphaël MANSUY elaborates on why classic RAG doesn’t work and what to do about it, as a preamble to introducing EdgeQuake: a high performance open source Graph-RAG framework in Rust.

MegaRAG automatically builds knowledge graphs from visual documents. And Graphcore Research published UltRAG: a Universal Simple Scalable Recipe for Knowledge Graph RAG.

A group of Chinese researchers published a systematic survey of Graph Retrieval-Augmented Generation (GraphRAG), covering workflow formalization, downstream tasks, application domains, evaluation methodologies, and industrial use cases, along with an open source repository.

Google published a guide to building GraphRAG agents with Google’s Agent Development Kit. This hands-on tutorial demonstrates how to create intelligent agents that understand data context through graph relationships and deliver highly accurate query responses.

Steve Hedden explores the rise of context engineering and semantic layers for Agentic AI. He notes that RAG may have been necessary for the first wave of enterprise AI, but it’s evolving into something larger. Neo4j’s Alex Gilmore wrote the Text2Cypher Guide, elaborating on when and how to implement Text2Cypher in agentic applications.

State of the Graph

A comprehensive, up-to-date repository, visualization, and analysis of offerings across the graph technology space.

• Tech professionals exploring graph tools, platforms, and architectures

• Analysts and investors tracking market trends

• Vendors and builders seeking a clear, inclusive map to position their innovations

Knowledge Graphs in Software Engineering and Enterprise Architecture

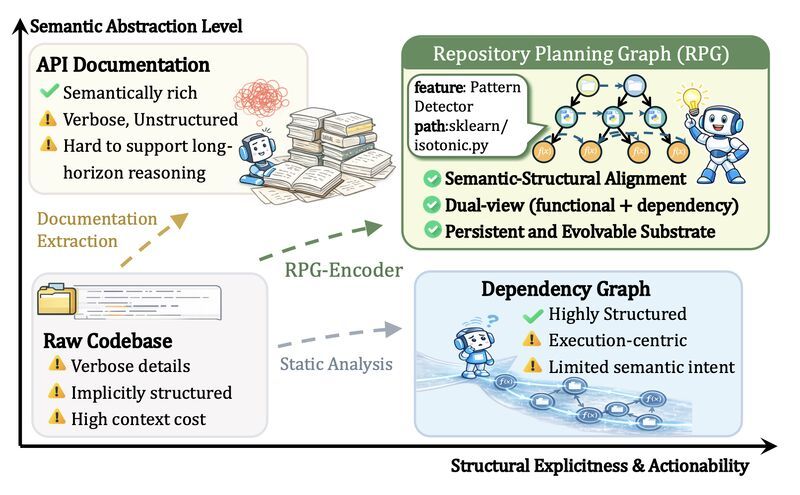

Bala Adithya Malaraju was trying to apply a GraphRAG architecture to his codebase, but kept running into issues. Then he decided to stop letting LLMs build his knowledge graphs, and adopted the Fixed Entity Architecture. The core idea is simple: instead of letting an LLM discover your ontology from scratch, you define it yourself.
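As a rough illustration of the "define it yourself" idea, the sketch below validates candidate graph edges against a small hand-written ontology. It assumes a simplified reading of the approach and does not reproduce the Fixed Entity Architecture itself: the entity types, relation names, and sample nodes are all hypothetical.

```python
# Hand-written ontology (hypothetical): the only typed relations the graph may contain.
ALLOWED_RELATIONS = {
    ("Module", "CONTAINS", "Class"),
    ("Module", "CONTAINS", "Function"),
    ("Class", "DEFINES", "Function"),
    ("Function", "CALLS", "Function"),
}


def conforms(triple, types):
    """True if (subject, relation, object) matches the fixed ontology."""
    subject, relation, obj = triple
    # Unknown nodes resolve to None, which never matches an allowed signature.
    signature = (types.get(subject), relation, types.get(obj))
    return signature in ALLOWED_RELATIONS


# Hypothetical nodes with declared types, and candidate edges
# as they might be proposed by a parser or an LLM.
node_types = {
    "billing": "Module",
    "Invoice": "Class",
    "Invoice.total": "Function",
    "round_money": "Function",
}
candidates = [
    ("billing", "CONTAINS", "Invoice"),
    ("Invoice", "DEFINES", "Invoice.total"),
    ("Invoice.total", "CALLS", "round_money"),
    ("Invoice", "INSPIRED_BY", "round_money"),  # relation not in the ontology
    ("Invoice.total", "CALLS", "Ledger"),       # node without a declared type
]

accepted = [t for t in candidates if conforms(t, node_types)]
rejected = [t for t in candidates if not conforms(t, node_types)]

print("accepted:", accepted)
print("rejected:", rejected)
```

The design choice here is that the schema acts as a gate: anything an extractor proposes outside the declared types and relations is dropped or flagged for review instead of silently widening the graph.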

This is just one application of knowledge graphs and ontology in the domain that’s probably seeing the biggest impact from AI: software engineering. There are many more. Amir Hosseini evaluates Codebase-Oriented RAG through Knowledge Graph analysis, using Code-Graph-RAG and gdotv.

GitNexus turns a repository into an AST-driven knowledge graph directly in the browser. session-graph turns scattered AI coding sessions into a queryable knowledge graph. Repository Planning Graph Encoder creates a unified, high fidelity representation for AI-assisted coding.

Repolex offers semantic code intelligence through RDF knowledge graphs. Code review graph creates a local knowledge graph for Claude Code. pr-split decomposes large PRs into a Directed Acyclic Graph of small, reviewable stacked PRs, and gitCGR instantly visualises any GitHub repo as a graph.

But ultimately software engineering is just one part of Enterprise Architecture. What if ontology could revitalize Enterprise Architecture?

This is the question driving Alberto D. Mendoza’s conversion of ArchiMate 3.2 to an RDF Ontology. Enterprise Architecture frameworks like TOGAF, DoDAF, and FEAF have long used ArchiMate: an open, vendor-neutral, standardized graphical modeling language used to describe, analyze & visualize architectures.

The problem is that after ArchiMate diagrams are created they are flattened, saved as a PDF, and the knowledge it took so long to collect is frozen. But ArchiMate is more than a drawing standard: it’s a formal language with precisely defined element types and relationship semantics.

Elements could be stored in a model that is governed, referenced, and evolves over time rather than recreated from scratch. But EA tools store this information in relational tables, so EA becomes a roadblock. Graphs are the obvious fix. RDF/OWL was designed for rich knowledge representation, so this seems like a natural match.

Connected Data London 2026

10 Years Connecting Data, People and Ideas

🎤 Keynote: William Tunstall-Pedoe, the pioneer behind Amazon Alexa

🔹 Malcolm Hawker – Thought leader, CDO Profisee

🔹 Juan Sequeda – Principal Fundamental Researcher, ServiceNow

🔹 Jessica Talisman – Semantic Architect, Founder of The Ontology Pipeline

Knowledge Graph Research, Applications and Best Practices

Similar to how software engineering is a premium application domain for knowledge graphs, graph is emerging as the fastest growing segment in AI research. Graph was a significant part of NeurIPS 2025, signifying its growing importance and market share.

Dan McGrath’s findings reinforce this. McGrath tracked the raw growth of graph-related research against the baseline of all AI papers from 2023 to present. The results show a clear acceleration, with a turning point in 2024, when graph became the fastest growing segment in AI research.

Real-world applications abound as well, as shown in Juan Sequeda’s Connected Data London 2025 trip report. A knowledge graph conference where every single talk came from businesses. Not by vendors. Not POCs. Real production deployments with mature architectures and well thought out roles and processes.

Sequeda has been a knowledge graph builder and advocate for decades. He shared 20 lessons from 20 years of building ontologies and knowledge graphs, and he will be back at Connected Data London 2026 as part of an initial lineup also featuring William Tunstall-Pedoe, Malcolm Hawker and Jessica Talisman.

Veronika Heimsbakk wrote a series of posts for data engineers looking to understand knowledge graphs. Kicking off with the motivation – why you should care about knowledge graphs – she elaborates on data engineering ontologies, a few elementary pieces on logic, and shares a translation guide – SPARQL for SQL developers.

Ashleigh Faith also has decades of experience modeling knowledge graphs and ontology. She shares her top 10 modeling tips for ontology and graph. While her tips have a heavy focus on RDF-based graph models, the principles are deep enough to be useful for almost any graph data modeling project.

The debate between the RDF and Labelled Property Graph (LPG) graph data models is ongoing. Sergey Vasiliev explains Property Graph and LPG as structural and applied semantic models, places RDF in its role as a general semantic framework, and formally analyses the relationships between them. He argues RDF is a knowledge representation model and LPG is decision infrastructure.

Niklas Emegård shares a no-ontology hack to show that you don’t need to spend weeks data modeling to start building an RDF knowledge graph, and Pieter Colpaert argues for eventual interoperability – avoiding getting stuck on making trade-off decisions and having to wait for consensus.

Pragmatic AI Training

From Data Literacy to Data Science and Pragmatic AI

For those who want to understand the first principles of AI, and learn how to use it to get results.

• Created through extensive experience

• Designed for busy professionals

• Validated by global leaders

• Delivered on-site

Knowledge Graph Tools and Platforms

If you are looking for knowledge graph tools and platforms you can use, there are some resources to help there too.

knwler turns documents into structured knowledge, extracting entities, relationships, and topics. Knowledge Graph Toolkit (KGTK) is a Python library for easy manipulation of knowledge graphs. graflo is a framework for transforming tabular and hierarchical data into property graphs and ingesting them into graph databases.

The State of the Graph is a comprehensive, up-to-date repository, visualization, and analysis of offerings across the graph technology space. The State of the Graph knowledge graph catalog brings together dedicated platforms, infrastructure providers, and knowledge‑centric search and management tools so you can see who is doing what, where they overlap, and where they differ.

TopBraid’s Steve Hedden created Open Knowledge Graphs – a search engine for ontologies, controlled vocabularies, and Semantic Web tools. Ítalo Oliveira created a shortlist of Conceptual Modeling and Linked Data Tools. And Michael Hoogkamer created an interactive taxonomy of semantic modeling concepts.

Taxonomists have a role in the new world of Generative AI, and Yumiko Saito reflects on it. Kurt Cagle explores how to make taxonomies (and knowledge graphs in general) more friendly for LLMs.

To use LLMs with knowledge graphs, Fanghua (Joshua) Yu proposes Generative Knowledge Modeling (GenKM): a comprehensive methodology introducing a modular four-stage architecture that unifies 40+ existing Graph RAG systems under a common formalism, a generative operator algebra, and the GenKG Lifecycle for end-to-end knowledge graph governance.

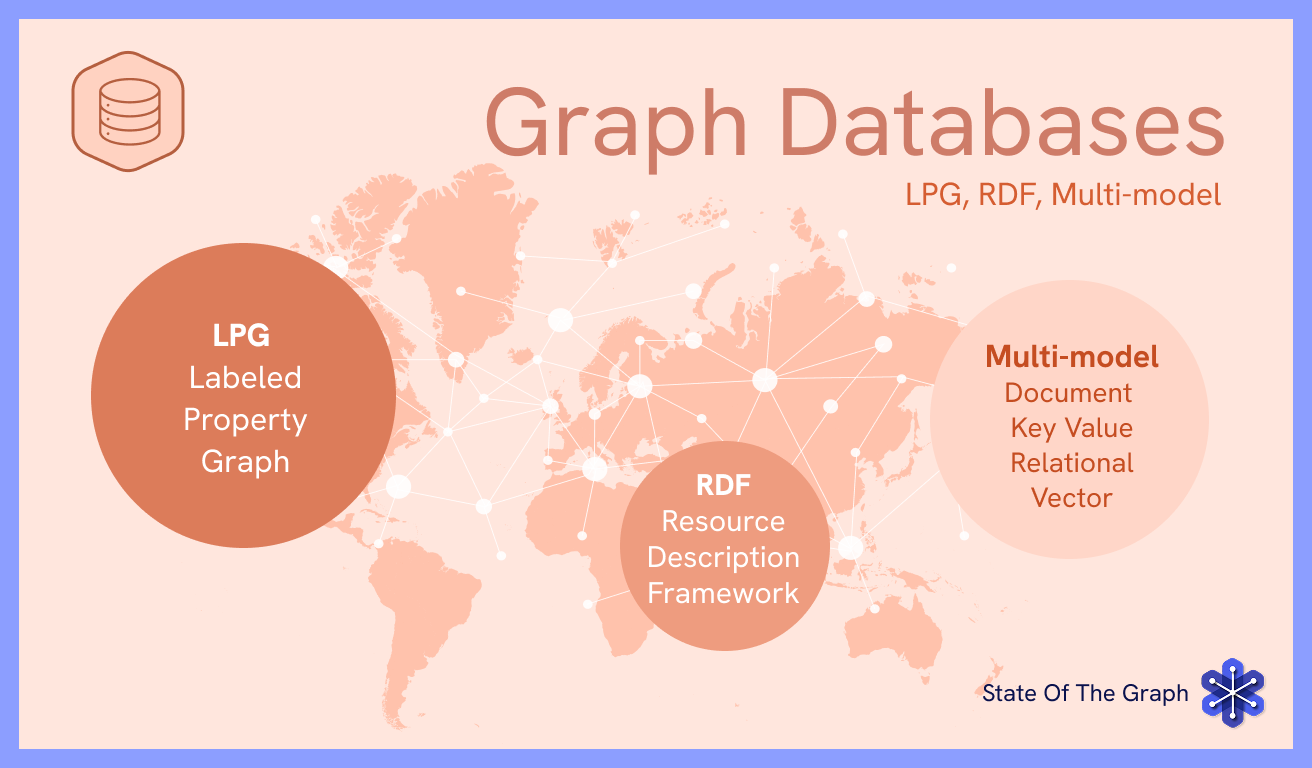

The State of the Graph Database Market

The graph database market is growing, with more competition among vendors and more options for users. The State of the Graph catalog of graph databases is an attempt to present the market in a single, structured, vendor‑inclusive view. It aims to enable users to see how graph databases compare across different features.

There are more than 50 graph databases listed on the State of the Graph catalog. But Jason Saltzman, Head of Insights at CB Insights, notes that like cloud infrastructure before it, databases are moving from broad experimentation to standardization around a few critical workloads. As that happens, the market becomes far less forgiving.

Saltzman calls out Neo4j, noting that their momentum reflects scale and defensibility: $200M ARR, 84% Fortune 100 penetration, and accelerating GraphRAG adoption, contributing to one of the highest IPO probabilities CB Insights tracks.

Sudhir Hasbe, Neo4j CPO, elaborates on Neo4j’s architectural evolution in 2025, and shares a roadmap for 2026. Notably, this includes “Ontologies as a First-Class Citizen”: a top-level, independent modeling tool with a repository of use-case-specific samples and native graph schema enforcement. The latest version of Neo4j introduces support for schema as a preview feature.

The State of the Graph database catalog offers a single access point for browsing, comparing, and choosing the offering that is right for your needs.

We saw mobility in the graph database landscape, with new vendors and releases.

TuringDB released Community Version, an open-source edition of its high-performance, versioned graph database. AllegroGraph released v8.5, combining knowledge graphs, vector embeddings, and neurosymbolic reasoning. Memgraph released Atomic GraphRAG Pipelines, implementing sophisticated pipelines as atomic database queries.

SurrealDB released version 3.0, bringing improvements on stability, performance and tooling, developer experience, and building AI agents, while also raising a $23M Series A extension. Vela Partners released a new fork of KuzuDB and added concurrent multi-writer support.

Apparently KuzuDB was acquired by Apple, creating a gap in the graph database ecosystem. In addition to KuzuDB forks, Grafeo is a new embedded graph database built in Rust. Samyama is another distributed graph database written in Rust, which recently released v0.6.1.

Both Grafeo and Samyama highlight LDBC benchmark results. The Graph Data Council (GDC), formerly known as the Linked Data Benchmark Council (LDBC), is a non-profit organization that defines standard graph benchmarks and fosters a community around graph processing technologies.

There are more benchmarks and updates for the Gremlin ecosystem. LDBC SNB Interactive for TinkerPop is a Gremlin-based implementation of the LDBC Social Network Benchmark (SNB) Interactive v1 workload. TinkerBench is a benchmarking tool designed for graph databases based on Apache TinkerPop. And the Second Edition of Practical Gremlin: An Apache TinkerPop Tutorial was published.

Graph Analytics and Graph AI Updates

The Graph Analytics market is projected to grow from USD 2.41 billion in 2025 to USD 2.92 billion in 2026 (21.61% CAGR), on track to reach USD 9.49 billion by 2032. The State of the Graph catalog for graph analytics offers a single access point for browsing, comparing, and selecting graph analytics tools that match your needs.
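As a quick sanity check on these figures, the sketch below compounds the 2025 base forward and, separately, derives the growth rate implied by the two endpoints. It assumes the quoted CAGR applies to the seven annual steps from 2025 to 2032. The function names are illustrative.

```python
def project(base, rate, years):
    """Compound a starting value forward at a fixed annual growth rate."""
    return base * (1 + rate) ** years


def implied_cagr(start, end, years):
    """Annual growth rate that takes `start` to `end` in `years` years."""
    return (end / start) ** (1 / years) - 1


size_2025 = 2.41      # USD billion, from the text
size_2032 = 9.49      # USD billion, from the text
cagr = 0.2161         # 21.61%, from the text
years = 2032 - 2025   # assumption: growth compounds over seven annual steps

projected_2032 = project(size_2025, cagr, years)
rate_from_endpoints = implied_cagr(size_2025, size_2032, years)

print(f"Projected 2032 size: USD {projected_2032:.2f} billion")
print(f"CAGR implied by the endpoints: {rate_from_endpoints:.2%}")
```

Under that assumption the compounded value lands close to the quoted USD 9.49 billion, and the rate implied by the endpoints is close to the quoted 21.61%. The small differences are consistent with rounding in the published numbers.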

A noteworthy entry in the graph analytics market is Google BigQuery Graph. BigQuery Graph, currently in private preview, enables users to query at scale, unify data, and visualize insights, while supporting the Graph Query Language (GQL).

Netflix leverages graph analytics too. The team shared how and why Netflix built a real-time distributed graph and how they created a high-throughput graph abstraction. The next step was the AI evolution of graph search at Netflix, going from structured queries to natural language.

ClickHouse and PuppyGraph introduce the LakeHouse Graph concept: Zero-Copy graph analytics, querying relationships directly on existing data without ETL to a graph database. DuckDB also offers graph analytics now via Onager.

Graphina is a graph data science library for Rust. It provides common data structures and algorithms for analyzing real-world networks, such as social, transportation, and biological networks. The Neo4j blog offers background and examples on some of the most common graph analytics algorithms – Louvain, Jaccard, and PageRank.
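For readers new to these algorithms, here is a minimal pure-Python sketch of two of them, Jaccard similarity and PageRank, on a toy graph. It does not use Graphina or Neo4j. The graph, function names, and parameter defaults are illustrative assumptions, and Louvain is omitted for brevity.

```python
def jaccard(graph, a, b):
    """Jaccard similarity of two nodes: shared neighbours over all neighbours."""
    na, nb = set(graph[a]), set(graph[b])
    union = na | nb
    return len(na & nb) / len(union) if union else 0.0


def pagerank(graph, damping=0.85, iterations=50):
    """Power-iteration PageRank on a directed graph given as adjacency lists.

    Assumes every link target also appears as a key in `graph`.
    """
    nodes = list(graph)
    n = len(nodes)
    rank = {node: 1.0 / n for node in nodes}
    for _ in range(iterations):
        # Rank held by nodes without outgoing links is spread over all nodes.
        dangling = sum(rank[node] for node in nodes if not graph[node])
        new_rank = {
            node: (1 - damping) / n + damping * dangling / n for node in nodes
        }
        for node in nodes:
            for target in graph[node]:
                new_rank[target] += damping * rank[node] / len(graph[node])
        rank = new_rank
    return rank


# Hypothetical toy graph: each key links to the nodes in its list.
links = {
    "a": ["b", "c"],
    "b": ["c"],
    "c": ["a"],
    "d": ["c"],
}

similarity = jaccard(links, "a", "b")  # neighbours {b, c} versus {c}
ranks = pagerank(links)
top = max(ranks, key=ranks.get)

print("Jaccard(a, b):", similarity)
print("Highest-ranked node:", top)
```

Jaccard compares the neighbourhoods of two nodes, while PageRank scores each node by the rank flowing in from the nodes that link to it. Library implementations add convergence checks and weighting options that this sketch leaves out.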

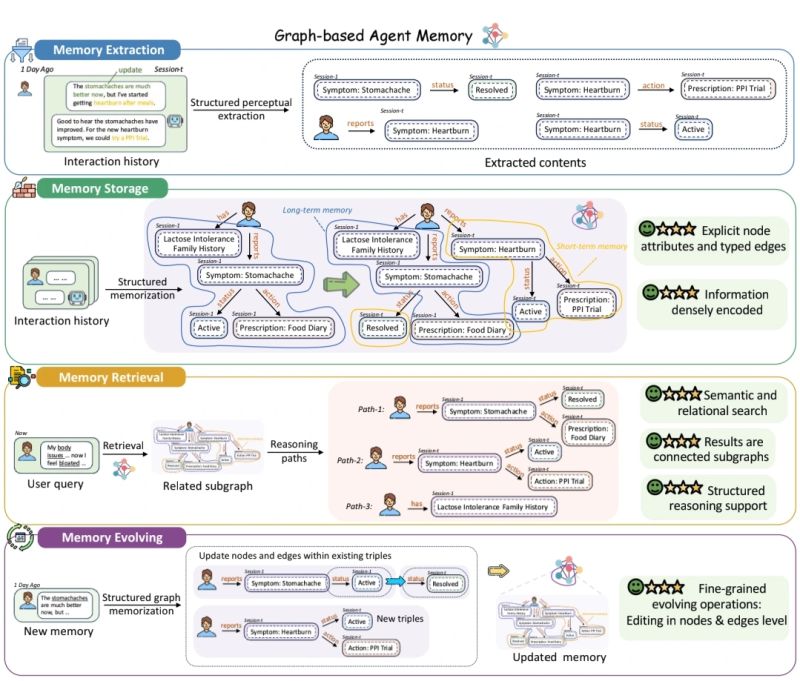

Graph AI is being redefined by the advent of graph memory for AI agents. “Graph-based Agent Memory: Taxonomy, Techniques, and Applications” presents a comprehensive review of agent memory from the graph-based perspective. Cognee, an open source AI memory engine that turns data into a living knowledge graph, raised $7.5M seed funding to build memory for AI agents.

Himanshu Jha elaborates on a parallel between how Transformers changed sequence modeling and how Graph Transformers might be changing graph learning, framing the shift from GNNs to Graph Transformers through the lens of the Transformer revolution.

GraphBench is a comprehensive graph learning benchmark across domains and prediction regimes. It standardizes evaluation, includes a unified hyperparameter tuning framework, and provides strong baselines with state-of-the-art message-passing and graph transformer models, along with easy plug-and-play code.

Graph Billion-Foundation-Fusion (GraphBFF) is the first end-to-end recipe for building billion-parameter Graph Foundation Models (GFMs) for arbitrary heterogeneous, billion-scale graphs. Central to the recipe is the GraphBFF Transformer, a flexible and scalable architecture designed for practical billion-scale GFMs.
